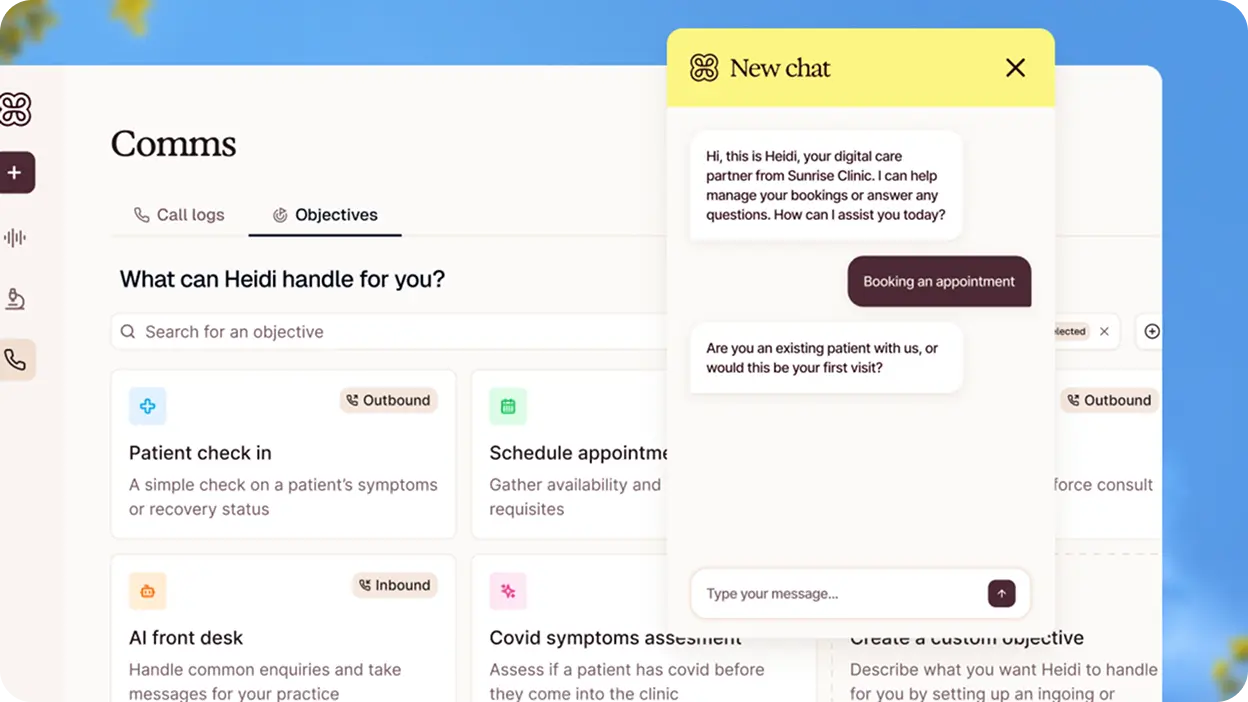

In 18 months, Heidi returned over 18 million hours to frontline clinicians. 73 million consults. 200+ specialties. 116 countries. That’s not traction — that’s a clinician-led movement.

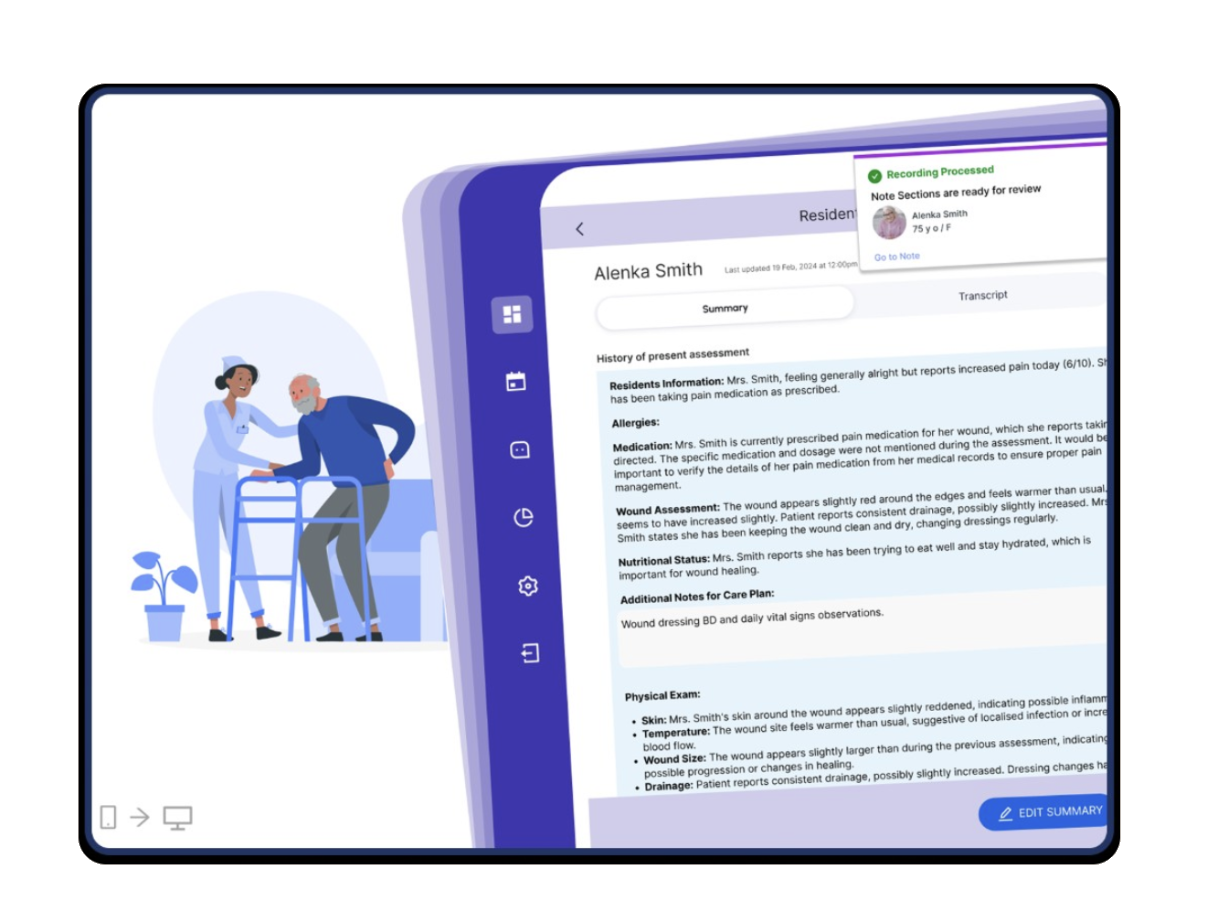

Product-market fit came from a freemium AI scribe that just works. Now the mission is expanding: from documentation into Tasks, Evidence, Comms, Ask Heidi, and a brand-new 21 g wearable, Heidi Remote. From tool to care partner.

That expansion is where the design challenge gets genuinely hard, because each new surface, each new capability, each new workflow is another moment where the product either deepens clinical trust or quietly erodes it.