Designing intelligent experiences for HealthTech: governed autonomy, trust, and human oversight

I am designing intelligent experiences across a HealthTech ecosystem spanning doctors, pharmaceuticals, staff, and hospital administration portals. My role is to architect the experience layer for agentic AI — defining outcomes, behaviours, guardrails, and service flows that make the system useful, trustworthy, and operationally effective.

Healthcare AI is moving toward governed autonomy — where traceability, explainability, and human oversight are foundational, not afterthoughts.

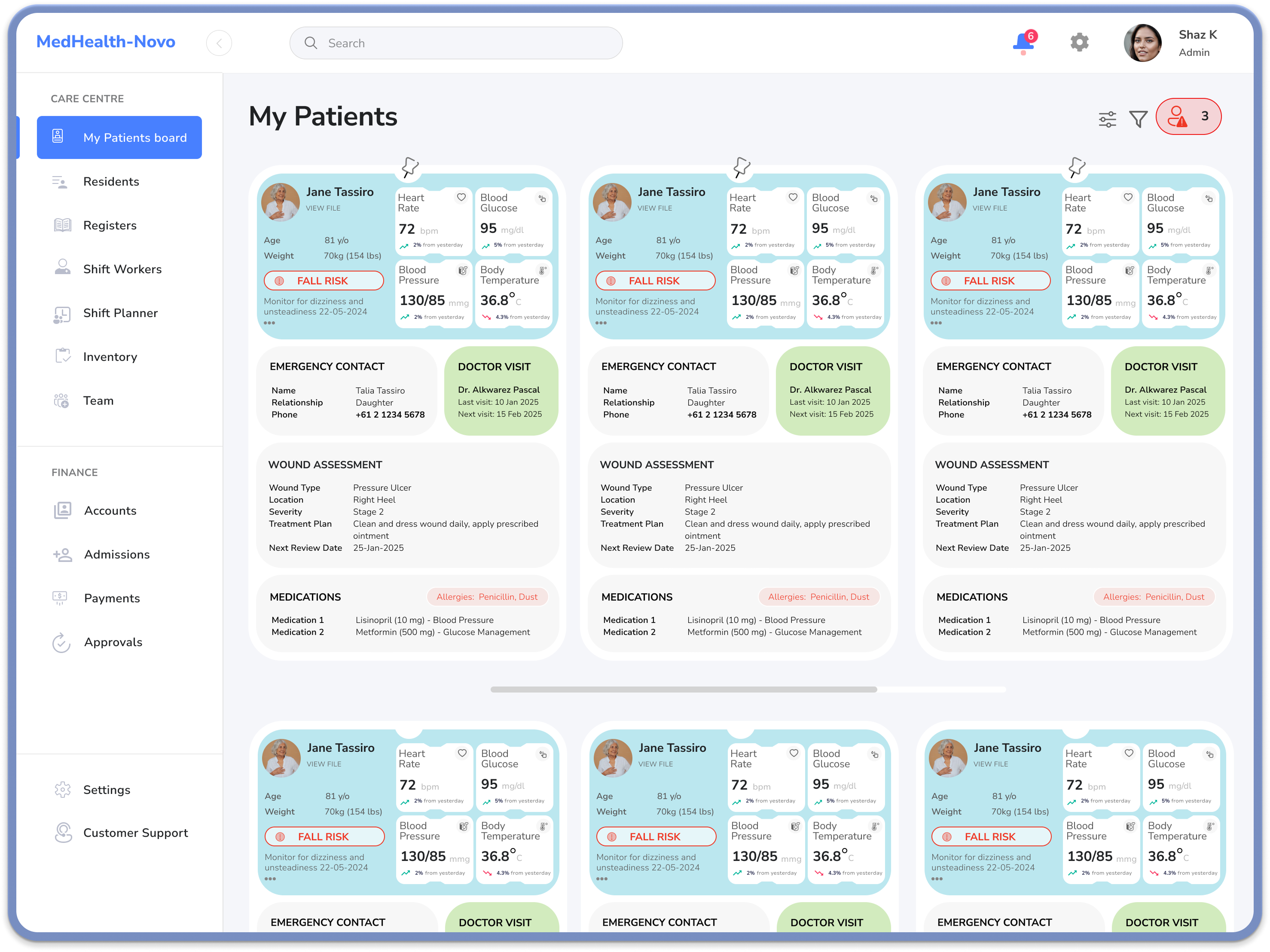

Intelligent experiences across a regulated ecosystem

I work with AI engineers to design intelligent experiences across a HealthTech ecosystem that includes doctors, pharmaceuticals, staff, and hospital admin portals. My role is to shape the experience architecture for agentic AI — defining what the system should do, how it should behave, where humans stay in control, and how trust is maintained across highly regulated workflows. Leaders in this space increasingly emphasise trust, explainability, governance, auditability, and human oversight as core design requirements.

From fragmented tools to delegable, trustworthy intelligence

HealthTech portals often evolve as disconnected tools rather than a coherent service ecosystem — leaving users to juggle fragmented tasks, duplicated steps, and inconsistent decision support.

The opportunity is not simply to add AI features, but to redesign the experience so users can delegate meaningful work to intelligent systems with confidence. That requires treating AI as part of the service model — with explicit guardrails, escalation paths, and measurable performance expectations.

Experience leadership, systems thinking, AI fluency

I bring experience design leadership, systems thinking, and AI fluency to shape how the product should behave. I work closely with engineers, product managers, and data teams to translate user goals into agent responsibilities, service flows, constraints, and operating policies.

My focus is not on coding every solution, but on architecting the experience so the team can build intelligent systems that remain safe, explainable, and useful.

- Define agent responsibilities and orchestration between people, tools, and systems

- Align product behaviour with governance, compliance, and human oversight

- Partner with engineering and data on constraints, policies, and evaluation

Service and agent ecosystem — not isolated screens

I approach the work as a service and agent ecosystem. I map frontstage and backstage interactions, identify human actors and AI agents, and define orchestration between people, tools, and systems. In parallel, I design for consent, transparency, failure states, and escalation — because in healthcare, credibility depends on what happens when the system is uncertain, incomplete, or wrong.

I work in an outcome-led way, shifting the question from “what screen do they need?” to “what outcome are they trying to achieve, and what should the agent do on their behalf?” Users delegate intent; the system proposes steps, checks constraints, and returns something useful and trustworthy. That is the core move of agentic UX in a regulated context.

A coherent intelligence layer across portals

Across the ecosystem, the opportunity is to provide intelligent helpers that reduce effort and improve coordination. Examples include relevant summaries or next best actions in the doctors portal; retrieval, classification, or routing support in the pharmaceuticals portal; and operational coordination and triage for hospital admin. The common thread is a single intelligence layer across different contexts, so the experience feels consistent, credible, and supportive.

- Doctors: Decision support and summaries aligned to clinical and operational context.

- Pharmaceuticals & staff: Faster retrieval, classification, and routing with clear accountability.

- Hospital admin: Operational coordination and triage with traceable handoffs.

An operating manual — not just a prototype

A strong AI experience in HealthTech needs explicit goals, policies, guardrails, failure modes, escalation paths, and metrics — so we know whether an agent is useful in practice. That includes building trust through governance, aligning with compliance early, and ensuring clear human oversight when risk or ambiguity is high.

With teams, I define behavioural test cases and telemetry so agents can be evaluated like products and systems: task completion, error reduction, time saved, trust, adoption, and the quality of escalation decisions — a feedback loop to improve both experience and intelligence over time.
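As a rough illustration of how telemetry of this kind might be rolled up, the sketch below aggregates per-task events into a few of the metrics named above. The `TaskEvent` fields, the metric names, and the idea of scoring escalation quality as the share of escalations later judged appropriate in human review are all assumptions made for this example, not the framework the team used.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class TaskEvent:
    """One agent-assisted task, as it might appear in telemetry (hypothetical schema)."""
    completed: bool                           # did the user reach their outcome?
    escalated: bool                           # did the agent route to a human?
    escalation_was_appropriate: bool = False  # set in human review; only meaningful if escalated


def summarise(events: List[TaskEvent]) -> Dict[str, float]:
    """Aggregate events into completion rate, escalation rate, and escalation precision."""
    total = len(events)
    if total == 0:
        # No data yet: report zeros rather than dividing by zero.
        return {
            "task_completion_rate": 0.0,
            "escalation_rate": 0.0,
            "escalation_precision": 0.0,
        }
    escalated = [e for e in events if e.escalated]
    appropriate = sum(e.escalation_was_appropriate for e in escalated)
    return {
        "task_completion_rate": sum(e.completed for e in events) / total,
        "escalation_rate": len(escalated) / total,
        "escalation_precision": appropriate / len(escalated) if escalated else 0.0,
    }
```

Softer measures such as trust and adoption would need survey or usage data alongside counts like these, so this covers only the part that can be read straight from event logs.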

Experience & operating profile — in practice

A condensed “character sheet” for how we defined an agent: role, capabilities, guardrails, and tone — the kind of artefact that sits alongside human personas when designing agentic health workflows.

AI agent persona

Ava — Clinical Coordination Assistant

Support tool for clinicians and operations: summarisation, risk surfacing, and task coordination across portals — never a decision-maker.

- Human-in-the-loop

- Explainable

- Audit-ready

- Role-tailored

“When in doubt, surface uncertainty and route to a human — don't guess in clinical context.”

Operating profile

Purpose: Summarisation, risk detection, coordination — human approval for consequential actions.

Primary users: Doctors, pharmacists, nurse managers, ops managers.

Positioning: Support tool; avoids anthropomorphic language.

Behavioural posture

- Proactive vs. Conservative

- Casual vs. Professional

- Opaque vs. Transparent

- Speed-first vs. Verification-first

Core capabilities

- Aggregate relevant patient or operational data into concise, role-specific summaries.

- Highlight risks, anomalies, and potential next best actions.

- Draft tasks, notes, and communications for human review and approval.

- Log decisions, rationales, and overrides for audit.

Guardrails & constraints

- Never finalises a clinical decision; always frames output as recommendation.

- Cannot execute high-risk actions (e.g. changing meds, discharging) without explicit human approval.

- Must show why a recommendation is made — sources, guidelines, or patterns — and flag uncertainty.

- Escalates when confidence is low, data is incomplete, or risk classification is high.
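One way guardrails like these could be made inspectable is as a small gate that every proposed action passes through before execution. The sketch below is a minimal illustration under stated assumptions: the action names in `HIGH_RISK_ACTIONS`, the `ProposedAction` fields, and the `may_execute` function are hypothetical and do not describe the production system.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical action identifiers, based on the examples in the guardrail text.
HIGH_RISK_ACTIONS = {"change_medication", "discharge_patient"}


@dataclass
class ProposedAction:
    """An action the agent recommends; it is never a finalised decision."""
    name: str
    rationale: str                     # sources, guidelines, or patterns behind the recommendation
    approved_by: Optional[str] = None  # identifier of the approving human, if any


def may_execute(action: ProposedAction) -> bool:
    """Return True only when the guardrails allow the action to run."""
    if not action.rationale.strip():
        # A recommendation without a stated reason is never executed.
        return False
    if action.name in HIGH_RISK_ACTIONS:
        # High-risk actions require explicit human approval.
        return action.approved_by is not None
    return True
```

In this sketch, `may_execute(ProposedAction("change_medication", "interaction flagged"))` is refused until `approved_by` is set, while a low-risk draft with a rationale is allowed through for human review.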

Principles & tone

Behaviour: Conservative in ambiguity — prefers clarification over guessing. Transparent by default, in language clinicians understand. Respectful of time: prioritises and filters to what is most clinically relevant.

Voice: Professional, concise, and neutral. Avoids anthropomorphic framing; clearly positions itself as a support tool, not a peer or authority.

Operating focus

- Explainability: High

- Human oversight: High

- Audit & traceability: High

- Role-specific tailoring: High

- Autonomous execution: Low

Escalation sensitivity

How readily Ava routes to a human when signals are weak or risk is elevated.

- Low model confidence

- Incomplete data

- High risk classification

- Ambiguous user intent
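The four triggers above can be read as a simple rule: if any one of them fires, route to a human and say why. The following is a minimal sketch of that rule. The `Signals` fields, the risk labels, and the `CONFIDENCE_THRESHOLD` value of 0.8 are assumptions chosen for illustration; real thresholds would be set with clinical and compliance stakeholders.

```python
from dataclasses import dataclass
from typing import List

CONFIDENCE_THRESHOLD = 0.8  # assumed value, for illustration only


@dataclass
class Signals:
    """Inputs to the escalation decision (hypothetical schema)."""
    confidence: float    # model confidence in [0, 1]
    data_complete: bool  # is all required input data present?
    risk_level: str      # "low", "medium", or "high"
    intent_clear: bool   # could the user's request be interpreted unambiguously?


def escalation_reasons(s: Signals) -> List[str]:
    """Return every trigger that fired, so the handoff can explain itself."""
    reasons = []
    if s.confidence < CONFIDENCE_THRESHOLD:
        reasons.append("low model confidence")
    if not s.data_complete:
        reasons.append("incomplete data")
    if s.risk_level == "high":
        reasons.append("high risk classification")
    if not s.intent_clear:
        reasons.append("ambiguous user intent")
    return reasons


def should_escalate(s: Signals) -> bool:
    """Escalate to a human if any single trigger fired."""
    return bool(escalation_reasons(s))
```

Returning the list of reasons, rather than a bare boolean, keeps the handoff explainable: the human receiving the escalation can see which signal caused it.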

Vision, craft, and operational reality

My value is connecting vision, craft, and operational reality — helping teams move from abstract AI ambitions to an experience architecture that can be built, governed, and scaled responsibly. In regulated HealthTech, outcome-focused design, systems orchestration, and trust-led governance are what turn experimentation into something usable and defensible.

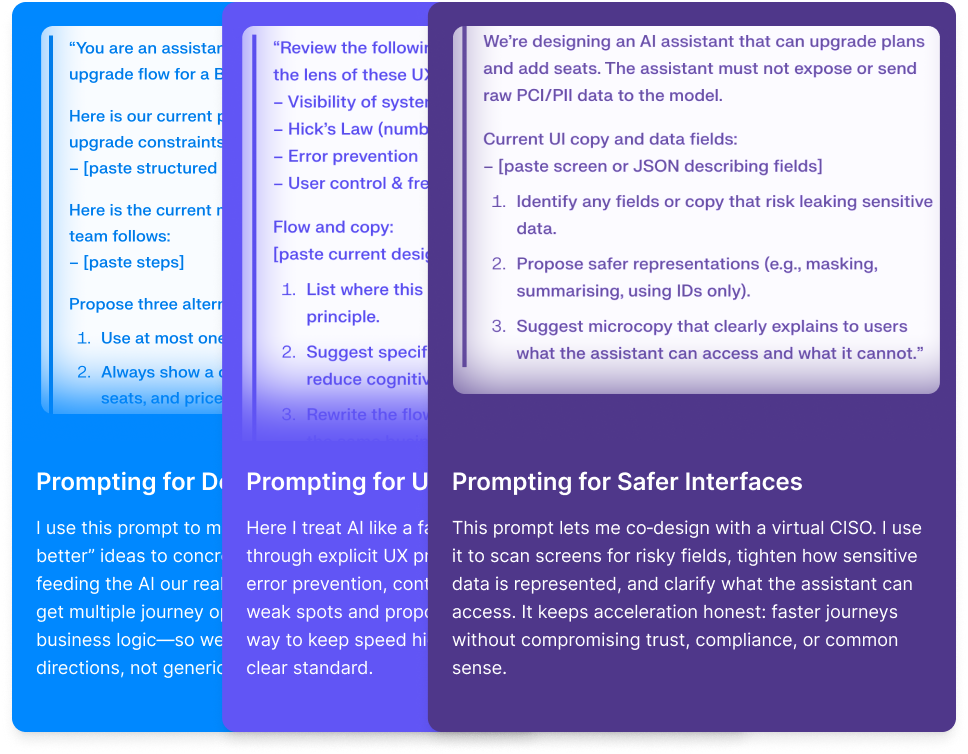

The process we worked through to get there

The work moved in stages — starting with human and AI personas, then journeys and orchestration, explicit rules for agents, UI exploration, and measurement — so trust, governance, and outcomes were intentional, not an afterthought.

- Step 1: Human personas & AI agent profile. We clarified who we were designing for across doctors, pharma, staff, and admin, and in parallel defined each AI agent: its role, personality, tone of voice, and experience & operating profile (what it may say, do, and escalate), so human and agent behaviour stayed coherent in regulated workflows.

- Step 2: Journey map. We mapped how a user goal travels across people, systems, and AI agents, so delegation points, handoffs, and risks were visible before we designed features.

- Step 3: Service blueprint. We laid out frontstage and backstage orchestration (who does what, when, and what the agent may act on) so the service model and AI layer stayed aligned.

- Step 4: Agent operating manual. We codified goals, policies, guardrails, failure modes, and escalation, so behaviour was inspectable and governable, not implied in UI copy alone.

- Step 5: Prototype screens. We pressure-tested portal UI and flows in prototypes: clarity, consent, recovery paths, and what “good” looks like when the agent is unsure or wrong.

- Step 6: Metrics framework. We aligned on how to measure adoption, trust, task success, and operational efficiency, so we could learn in production and improve both experience and intelligence over time.

Building agentic experiences in health? I'd welcome a conversation about governance, service architecture, and design leadership.

Get in touch

Agentic AI in regulated environments

If you are shaping intelligent experiences, oversight models, or cross-portal orchestration, let’s talk.

- Focused on practical outcomes

- Based in Sydney, working globally